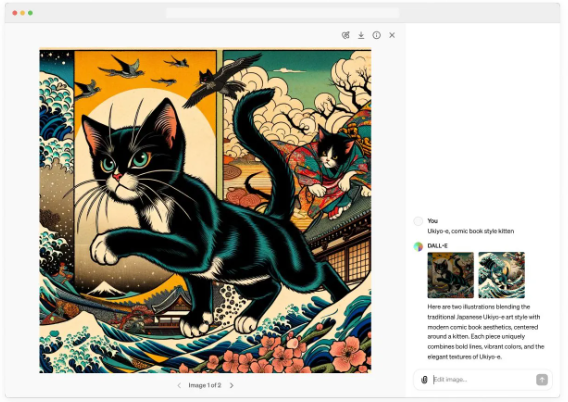

April 02, 2024 – OpenAI has announced a new editing interface for DALL-E 3 that lets users refine a generated image with follow-up text descriptions after the initial text-to-image generation.

The DALL-E editor offers two primary editing methods:

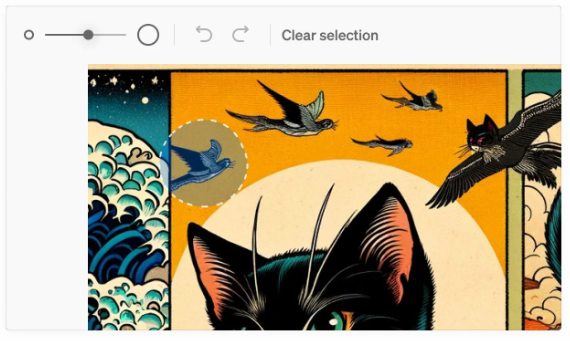

Selective Area-Based Editing:

After DALL-E 3 generates an image, users can select specific regions of it and enter a prompt in the chat interface asking DALL-E 3 to make targeted adjustments to those regions.

Conversational Editing:

After DALL-E 3 generates an image, users can describe the edits they want directly in the chat window without selecting a specific area. This method suits changes that apply to the image as a whole.

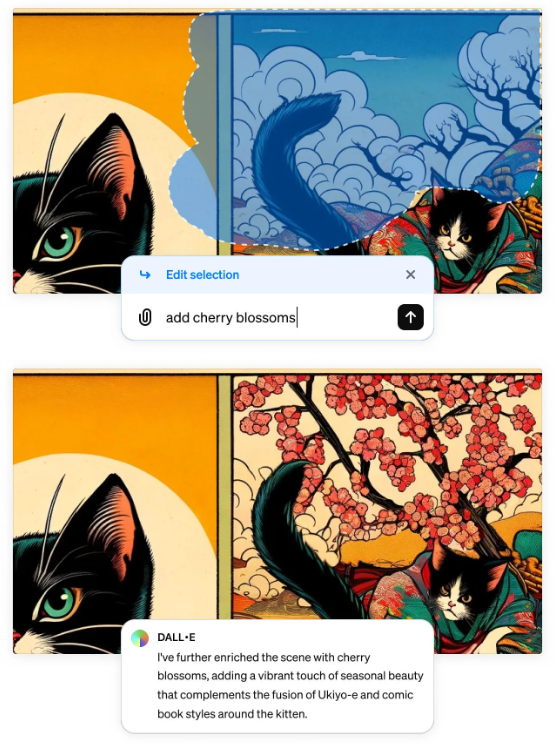

According to OpenAI, the editor refines the image generation process by letting users adjust generated images in greater detail. Being able to edit DALL-E outputs opens up applications such as:

- Enhancing the precision or realism of specific elements within an image.
- Introducing new visual elements to existing images.
- Modifying the style of generated images.